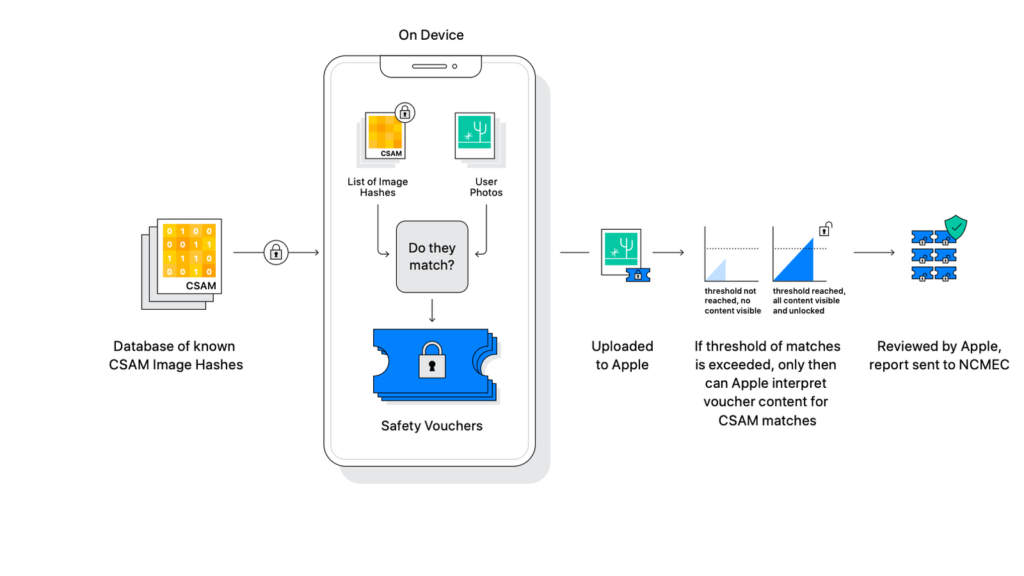

Apple has revealed details about the system that will scan users’ iPhones for Child Sexual Abuse Material (CSAM). Once photos on a device are flagged as CSAM, they will be reviewed by a human and reported to the authorities.

CALIFORNIA, UNITED STATES OF AMERICA – Tech giant Apple recently announced that it will scan iPhones in the United States for child sexual abuse images. The scan will be carried out by a tool named ‘neuralMatch’, which will check every photo that is uploaded to Apple’s cloud.

Once neuralMatch finds a suspected child abuse image, it will alert Apple. A human reviewer at Apple will then examine the photo, and if it is confirmed to contain child abuse material, it will be reported to the National Center for Missing and Exploited Children (NCMEC).

Apple presents the scan as a contribution towards curbing child abuse and the spread of abuse material. Speaking about the scan, Apple said, “We want to help protect children from predators who use communication tools to recruit and exploit them, and limit the spread of child sexual abuse material (CSAM). This program is ambitious, and protecting children is an important responsibility. These efforts will evolve and expand over time.”

How Apple’s neuralMatch works

The neuralMatch tool is central to the scanning process. Before photos on an iPhone are uploaded to the cloud, neuralMatch will compare each image against a database of known child abuse images. Because this database is already populated with known abuse material, the tool can use it to identify other devices that contain the same material.

neuralMatch only looks for images that are similar to the ones in its database. It is therefore highly unlikely that an ordinary picture of your own child would be reported from your device.
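The article does not describe neuralMatch’s internals. As a rough illustration only of the general idea of matching photos against a database of known images, the sketch below uses a simple “average hash”, a well-known perceptual hashing technique, in place of Apple’s actual algorithm, which it does not reproduce. The function names, the 8x8 grayscale grid input, and the distance threshold of 5 bits are all assumptions chosen for illustration. The point it shows: a near-copy of a known image (here, a slightly brightened one) produces a matching fingerprint, while a different image does not.

```python
from typing import Sequence

# Hypothetical input format: an 8x8 grid of grayscale pixel values (0-255).
# A real system would first resize and grayscale the photo; that step is omitted.
Grid = Sequence[Sequence[int]]


def average_hash(pixels: Grid) -> int:
    """Return a 64-bit fingerprint: bit = 1 where a pixel is brighter than the mean."""
    flat = [p for row in pixels for p in row]
    if len(flat) != 64:
        raise ValueError("expected an 8x8 grid")
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Count the bits that differ between two fingerprints."""
    return bin(a ^ b).count("1")


def matches_known(photo_hash: int, known_hashes: Sequence[int], max_distance: int = 5) -> bool:
    """Assumed rule: a photo 'matches' if it is within max_distance bits of any known hash."""
    return any(hamming(photo_hash, h) <= max_distance for h in known_hashes)


if __name__ == "__main__":
    known = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]  # stand-in "known" image
    database = [average_hash(known)]

    brighter = [[min(255, p + 3) for p in row] for row in known]  # near-copy of the known image
    different = [[252 - p for p in row] for row in known]         # an unrelated image

    print(matches_known(average_hash(brighter), database))   # True
    print(matches_known(average_hash(different), database))  # False
```

Comparing fingerprints rather than raw files is what lets lightly edited copies still match, while photos that merely share a subject (such as a parent’s own pictures of their child) produce unrelated fingerprints.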

The CSAM and encrypted messages scan

Apple has previously scanned photos stored in its cloud, but this is the first time the company will scan photos on the devices themselves. On-device scanning is unusual among tech companies, and Apple is probably among the first few to do it. The CSAM scan on Apple devices is a significant step towards protecting our children.

John Clark, President and CEO of the National Center for Missing and Exploited Children (NCMEC), said of the CSAM scan, “Apple’s expanded protection for children is a gamechanger. With so many people using Apple products, these new safety measures have lifesaving potential for children.”

In addition to the CSAM scan on user devices, Apple plans to scan encrypted messages to further protect children. An AI-based tool will scan messages sent or received on the device and automatically identify images of a sexual nature. Parents will be able to turn on filters for their children’s inboxes, and the tool can notify parents when their children send or receive such an image.

Privacy concerns surrounding the scans

The prospect of Apple scanning images and encrypted messages has raised concerns among many users. Some worry that the scans would give the company access to a large amount of personal data, which they fear it could sell. Apple has also faced government pressure to allow more surveillance of encrypted data. Caught between pressure from governments and from users, Apple faced a difficult task in introducing these security measures.
